Item Details

✨Follower Discount Now On!

We're offering special discounts exclusively to our followers!

¥2,000–¥3,999: ¥200 OFF

¥4,000–¥4,999: ¥300 OFF

¥5,000 and up: ¥500 OFF

☆Bundle purchase ⇒ an extra ¥200 off

Before purchasing, please leave a comment saying 【Follower discount requested】 and/or 【Bundle discount requested】 ^^

#古着屋さっちゃん商品一覧

Looking forward to lots of new followers!!

……………………………………………………………

♥Description

An outerwear piece from Sweetsoffice (*^^*)

♥Color

Brown

♥Materials

Shell

Polyester 47%

Wool 26%

Acrylic 25%

Nylon 2%

Lining

Polyester 100%

Fur trim

Acrylic 87%

Polyester 13%

♥Size (cm)

Shoulder width: 70

Chest width: 51

Length: 71

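♥Condition

Pre-owned but in excellent condition, with plenty of wear left in it!

There is a stain, shown in the 7th photo, but it is not noticeable, so this is a great deal if you don't mind it!

If you have any questions, feel free to leave a comment ^^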

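♥Packing & Shipping

Ships promptly after purchase.

Every item is packed with care.

☆To keep shipping costs down, items are shipped compressed.

Please accept any creases caused by compression.

※Measurements (length, etc.) are taken by an amateur, so please allow for small discrepancies.

☆About accessories

Bags, shoes, and other accessories shown in the photos are not included.

☆If anything is unclear, please ask ^^

☆No need to comment first; instant purchases are very welcome ^^

☆I'll ship as quickly as possible after purchase ٩(ᐛ)و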

商品の説明

2023年最新】588 7の人気アイテム - メルカリ

予約商品】ソフト クラウン 座椅子 バッククッション サポート 豪華 ...

パーティードレス 長袖 結婚式[品番:DSSW0000348]|DRESS+(ドレス ...

フラワー ガーベラ コースター ライトピンク | Francfranc(フラン ...

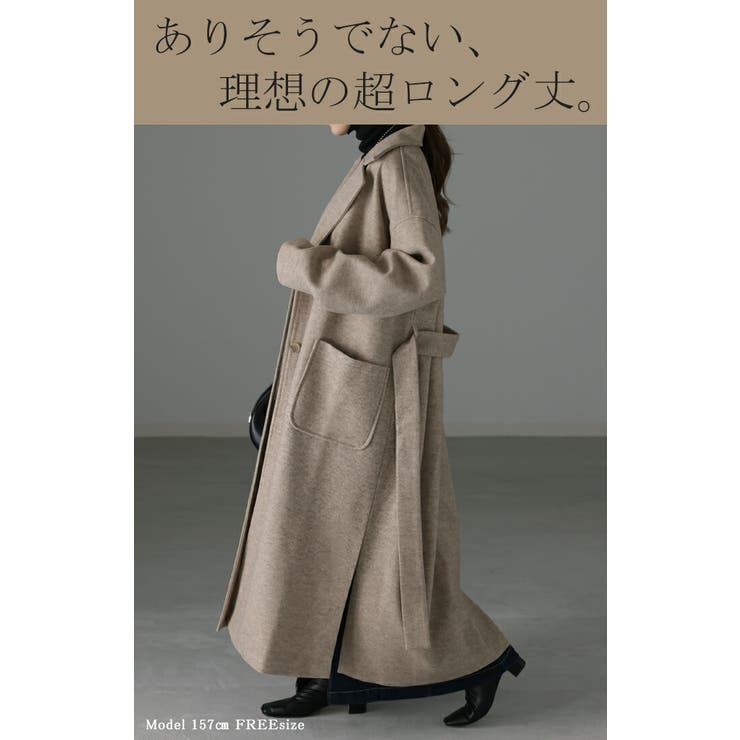

チェスターコート レディース ロングコート アウター 黒 コート[品番 ...

ホット製品 極美品✨ アパルトモン セットアップ ブラウス パンツ ...

殺処分ゼロを目指す「鳴門ねこの会」の活動を広げたい【第4弾 ...

LILY BROWN×MARY QUANT】デイジー刺繍ニットタンク(タンクトップ ...

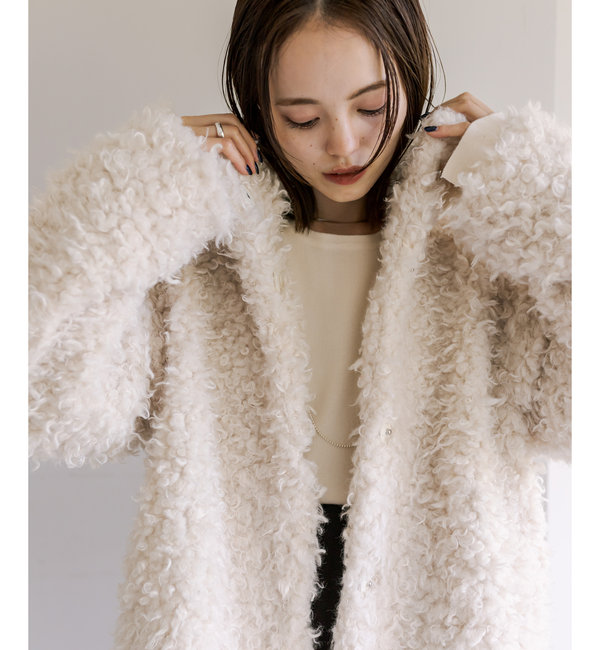

【2サイズ展開】プードルファーコート

大きいサイズ 小さいサイズ 全3色 レディース アウター ファー ...

【2サイズ展開】プードルファーコート

ワンピース レディース ニットワンピース 秋冬 ロングワンピース 長袖 ...

特集掲載!再販13】ひみつの森のシマエナガ 春のいのちの芽吹く森 ...

大きいサイズ 小さいサイズ 全3色 レディース アウター ファー フェイクファー コート ミディアム丈 オーバーサイズ ふわふわ あったか ゆったり 防寒 きれいめ シンプル エレガント 大人かわいい フェミニン 秋 冬 かわ大 かわ小